I am no longer regularly updating my list of publications. For an up-to-date record, please refer to Google Scholar.

Impact of Privacy Protection Methods of Lifelogs on Remembered Memories

Passant ElAgroudy, Mohamed Khamis, Florian Mathis, Diana Irmscher, Ekta Sood, Andreas Bulling, Albrecht Schmidt

In Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems, Hamburg, Germany, April 2023 (CHI 2023)

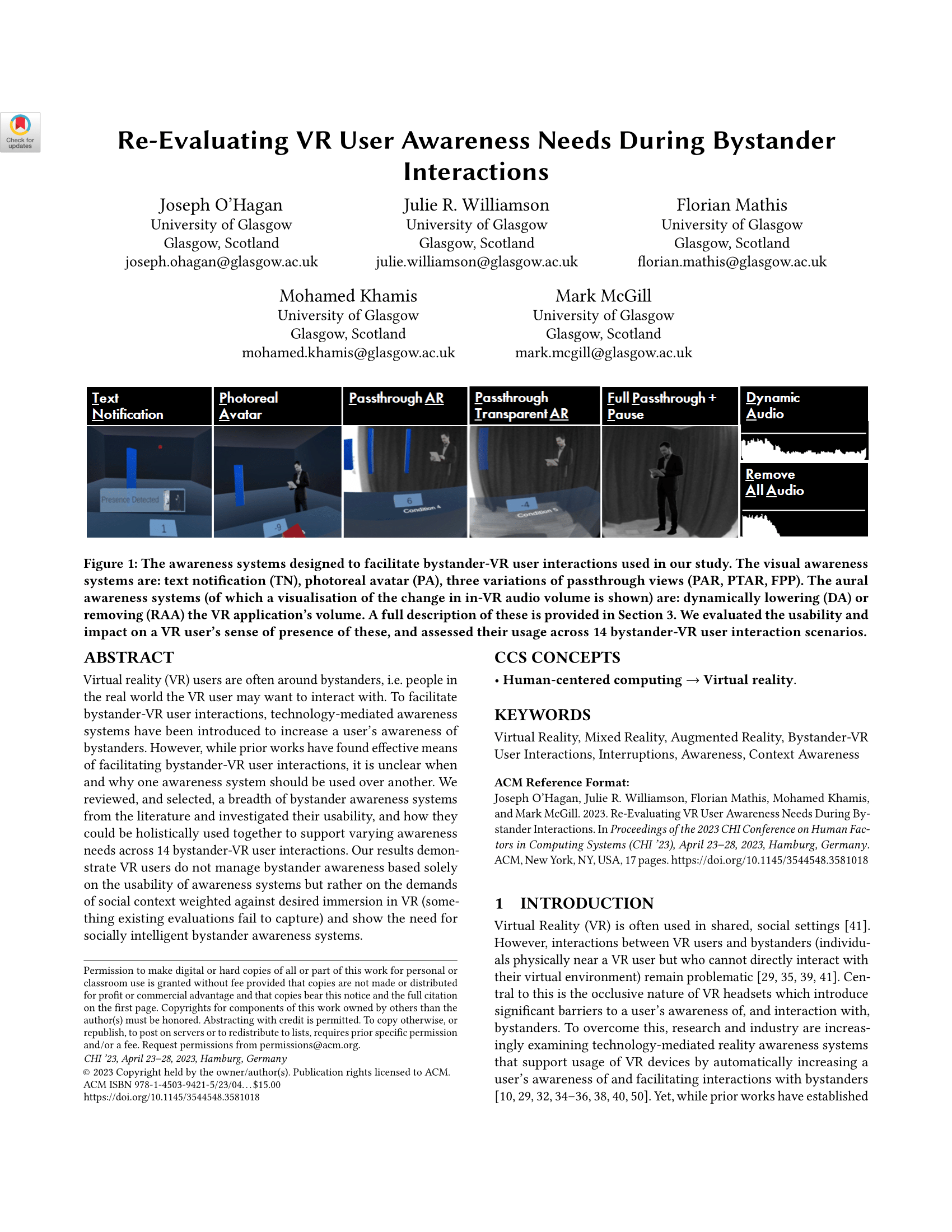

Re-Evaluating VR User Awareness Needs During Bystander Interactions

Joseph O'Hagan, Julie R. Williamson, Florian Mathis, Mohamed Khamis, Mark McGill

In Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems, Hamburg, Germany, April 2023 (CHI 2023)

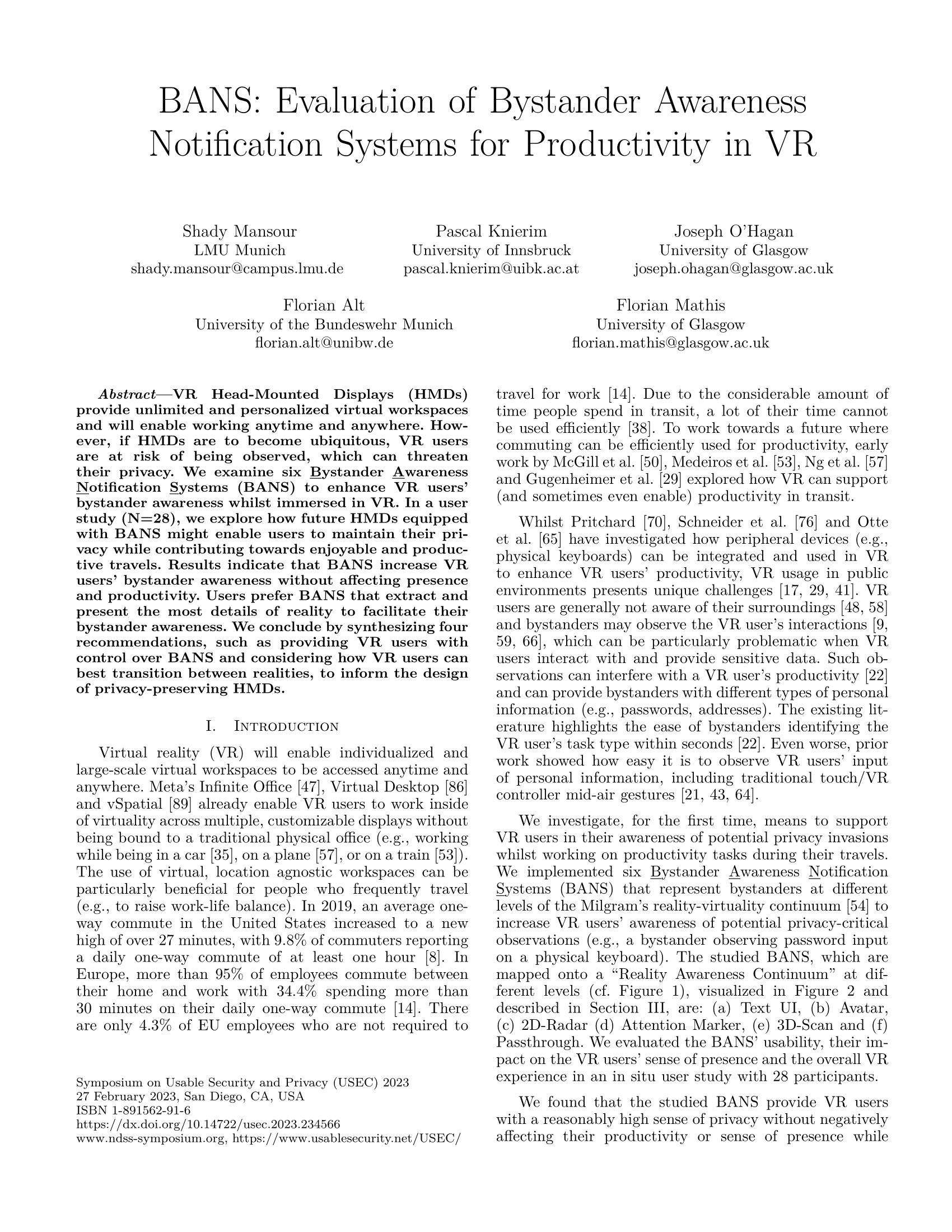

BANS: Evaluation of Bystander Awareness Notification Systems for Productivity in VR

Shady Mansour, Pascal Knierim, Joseph O'Hagan, Florian Alt, Florian Mathis

Usable Security and Privacy (USEC) Symposium 2023, in conjunction with the Network and Distributed System Security Symposium (NDSS 2023), San Diego, California, USA, February 2023 (USEC 2023)

Moving Usable Security and Privacy Research Out of the Lab: Adding Virtual Reality to the Research Arsenal

Florian Mathis

Lightning talk at the Eighteenth Symposium on Usable Privacy and Security, Boston, MA, USA, August 2022 (SOUPS 2022)

Stay Home! Conducting Remote Usability Evaluations of Novel Real-World Authentication Systems Using Virtual Reality

Florian Mathis, Joseph O'Hagan, Kami Vaniea, Mohamed Khamis

To appear in Proceedings of the International Conference on Advanced Visual Interfaces, Frascati, Rome, Italy, June 2022 (AVI 2022)

CueVR: Studying the Usability of Cue-based Authentication for Virtual Reality

Yomna Abdelrahman, Florian Mathis, Pascal Knierim, Axel Kettler, Florian Alt, Mohamed Khamis

To appear in Proceedings of the International Conference on Advanced Visual Interfaces, Frascati, Rome, Italy, June 2022 (AVI 2022)

VRception: Rapid Prototyping of Cross-Reality Systems in Virtual Reality

Uwe Gruenefeld, Jonas Auda, Florian Mathis, Stefan Schneegass, Mohamed Khamis, Jan Gugenheimer, Sven Mayer

In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, New Orleans, LA, USA, May 2022 (CHI 2022)

The Feet in Human-Centred Security: Investigating Foot-Based User Authentication for Public Displays

Kieran Watson, Robin Bretin, Mohamed Khamis, Florian Mathis

In Proceedings of the 2022 CHI Conference Extended Abstracts on Human Factors in Computing Systems, New Orleans, LA, USA, May 2022 (CHI EA 2022)

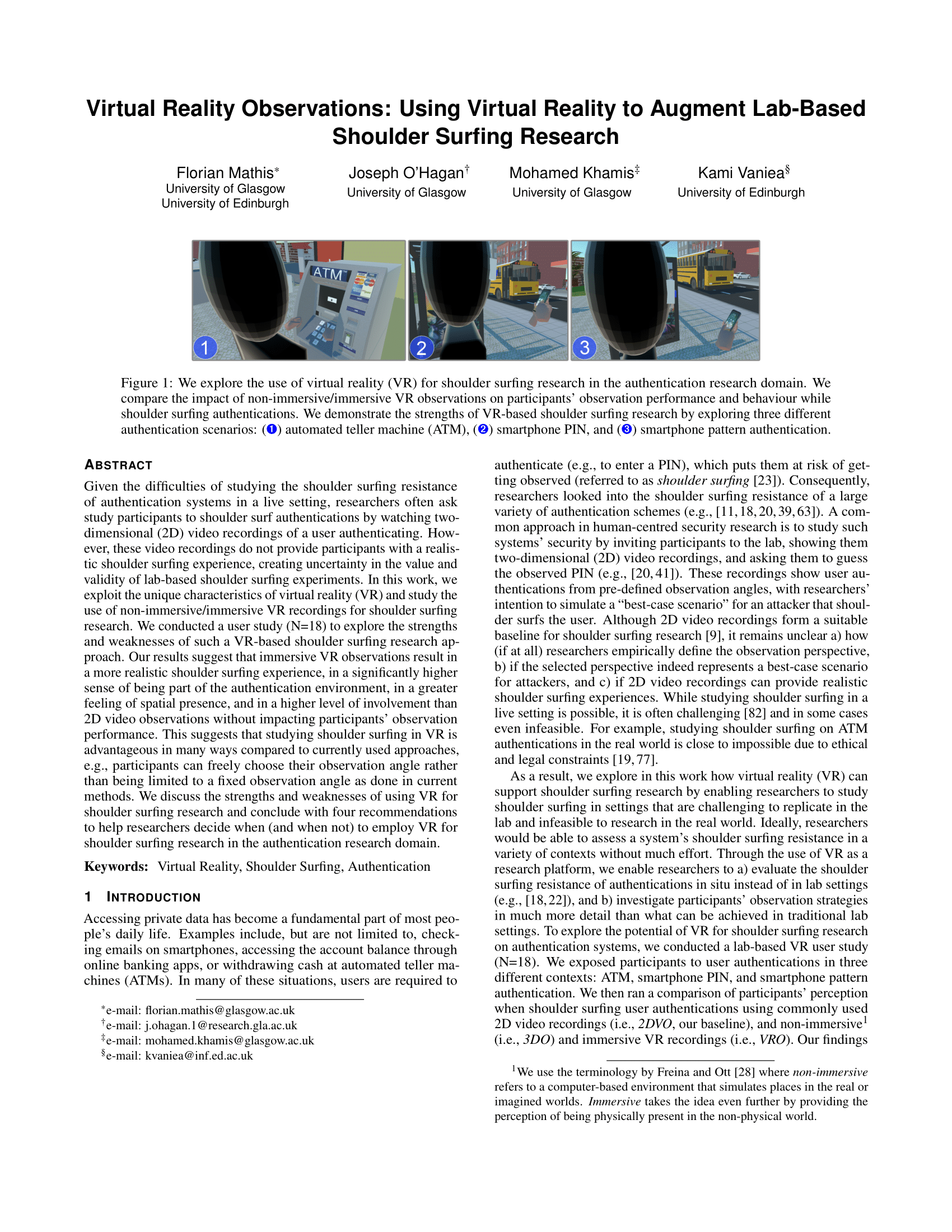

Virtual Reality Observations: Using Virtual Reality to Augment Lab-Based Shoulder Surfing Research (Best Conference Track Paper Nominee)

Florian Mathis, Joseph O'Hagan, Mohamed Khamis, Kami Vaniea

In Proceedings of the IEEE Conference on Virtual Reality and 3D User Interfaces (VR), Christchurch, New Zealand, March 2022 (IEEE VR 2022)

Can I Borrow Your ATM? Using Virtual Reality for (Simulated) In Situ Authentication Research

Florian Mathis, Kami Vaniea, Mohamed Khamis

In Proceedings of the IEEE Conference on Virtual Reality and 3D User Interfaces (VR), Christchurch, New Zealand, March 2022 (IEEE VR 2022)

Using Personal Data to Support Authentication: User Attitudes and Suitability

Jolie Bonner, Joseph O'Hagan, Florian Mathis, Jamie Ferguson, Mohamed Khamis

International Conference on Mobile and Ubiquitous Multimedia (MUM 2021)

Prototyping Usable Privacy and Security Systems: Insights from Experts

Florian Mathis, Kami Vaniea, Mohamed Khamis

International Journal of Human–Computer Interaction (2021)

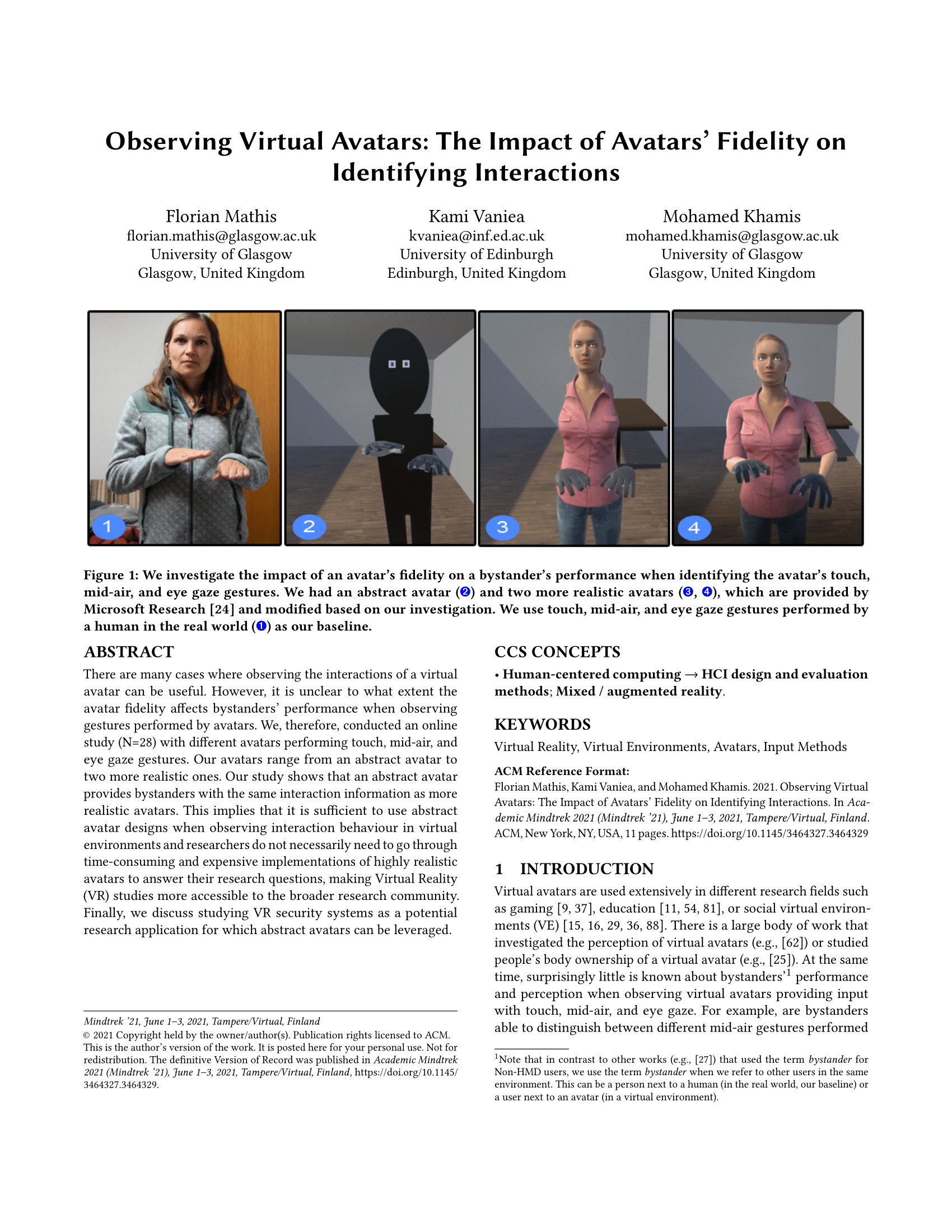

Observing Virtual Avatars: The Impact of Avatars' Fidelity on Identifying Interactions

Florian Mathis, Kami Vaniea, Mohamed Khamis

In AcademicMindtrek '21: Proceedings of the 24th International Academic Mindtrek Conference, Tampere, Finland, June 2021 (Mindtrek 2021)

Remote XR Studies: The Golden Future of HCI Research?

Florian Mathis, Xuesong Zhang, Joseph O'Hagan, Daniel Medeiros, Pejman Saeghe, Mark McGill, Stephen Brewster, Mohamed Khamis

In CHI 2021 Workshop on XR Remote Research: The First Remote XR Research Workshop, Yokohama, Japan, May 2021 (CHI 2021)

GazeWheels: Recommendations for using Wheel Widgets for Feedback during Dwell-time Gaze Input

Misahael Fernández Moncayo, Florian Mathis, Mohamed Khamis

Journal of Information Technology, special issue “Physiological Computing” (2021)

RepliCueAuth: Validating the Use of a Lab-Based Virtual Reality Setup for Evaluating Authentication Systems

Florian Mathis, Kami Vaniea, Mohamed Khamis

In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, Yokohama, Japan, May 2021 (CHI 2021)

[DC] VirSec: Virtual Reality as Cost-Effective Test Bed for Usability and Security Evaluations

Florian Mathis

In Proceedings of the IEEE Conference on Virtual Reality and 3D User Interfaces (VR), Lisbon, Portugal, March 2021 (IEEE VR 2021)

Fast and Secure Authentication in Virtual Reality using Coordinated 3D Manipulation and Pointing

Florian Mathis, John H. Williamson, Kami Vaniea, Mohamed Khamis

ACM Transactions on Computer-Human Interaction (ACM ToCHI), presented at CHI 2021, Yokohama, Japan

GazeWheels: Comparing Dwell-time Feedback and Methods for Gaze Input

Misahael Fernández Moncayo, Florian Mathis, Mohamed Khamis

In Proceedings of the Nordic Forum for Human-Computer Interaction (NordiCHI), Tallinn, Estonia, October 2020 (NordiCHI 2020; 14.7% acceptance rate)

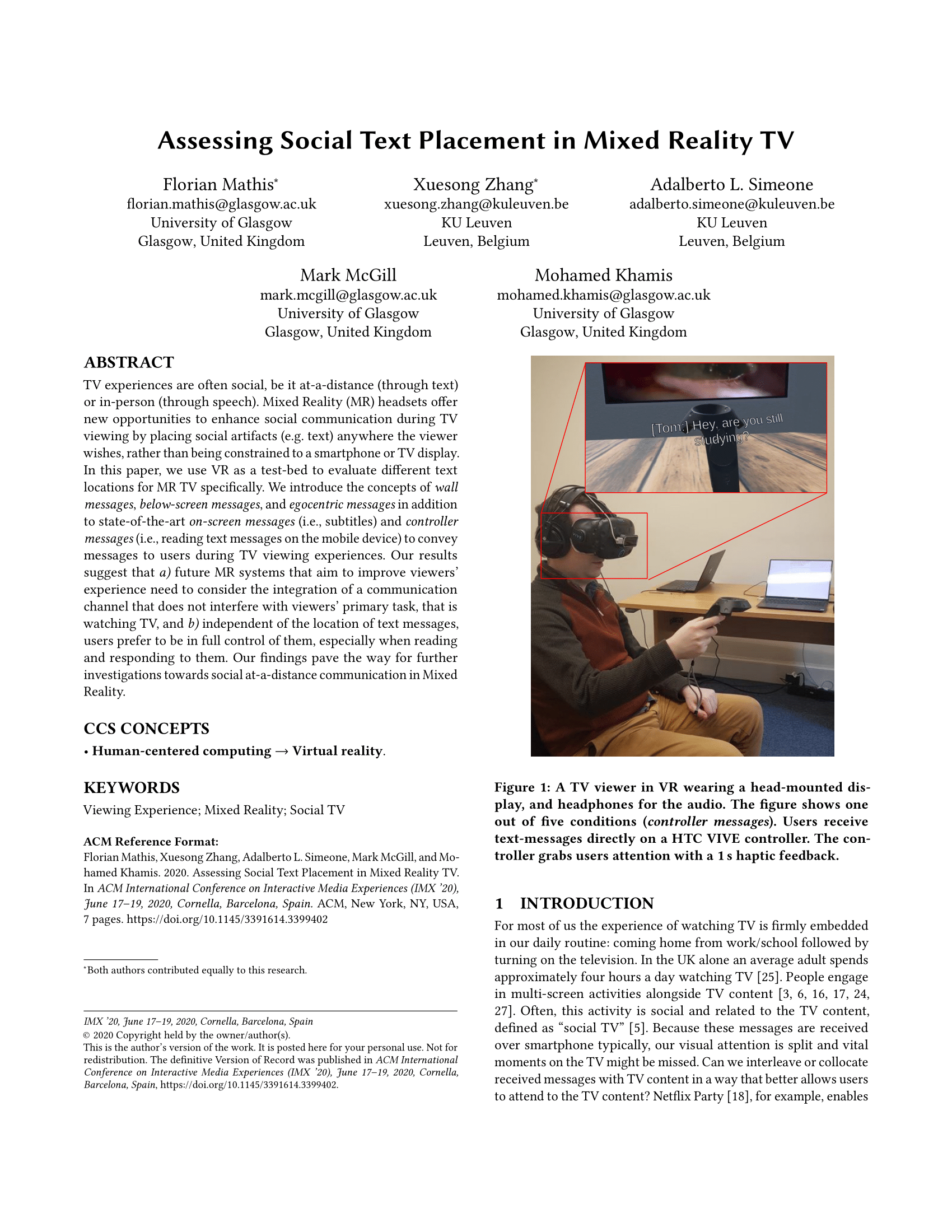

Assessing Social Text Placement in Mixed Reality TV (Best Late-Breaking Work Award, top 7.14% of accepted submissions)

Florian Mathis, Xuesong Zhang, Mark McGill, Adalberto L. Simeone, Mohamed Khamis

In Proceedings of the International Conference on Interactive Media Experiences, Barcelona, Spain, June 2020 (IMX 2020)

Augmenting TV Viewing using Acoustically Transparent Auditory Headsets

Mark McGill, Florian Mathis, Julie Williamson, Mohamed Khamis

In Proceedings of the International Conference on Interactive Media Experiences, Barcelona, Spain, June 2020 (IMX 2020)

Shared and Synchronous Mixed Reality Experiences

Mark McGill, Florian Mathis, Stephen Brewster

In CHI 2020 Workshop on SocialVR 2020: Social Virtual Reality Workshop, Honolulu, Hawaiʻi, USA, April 2020 (CHI 2020)

RubikAuth: Fast and Secure Authentication in Virtual Reality

Florian Mathis, John H. Williamson, Kami Vaniea, Mohamed Khamis

In Proceedings of the 2020 CHI Conference Extended Abstracts on Human Factors in Computing Systems, Honolulu, Hawaiʻi, USA, April 2020 (CHI 2020)

Knowledge-driven Biometric Authentication in Virtual Reality

Florian Mathis, Hassan Ismail Fawaz, Mohamed Khamis

In Proceedings of the 2020 CHI Conference Extended Abstracts on Human Factors in Computing Systems, Honolulu, Hawaiʻi, USA, April 2020 (CHI 2020)

Privacy, Security and Safety Concerns of using HMDs in Public and Semi-Public Spaces

Florian Mathis, Mohamed Khamis

In Proceedings of the CHI 2019 Workshop on Challenges Using Head-Mounted Displays in Shared and Social Spaces, Glasgow, Scotland, UK, May 2019 (CHI 2019)

Can Privacy-Aware Lifelogs Alter Our Memories?

Passant ElAgroudy, Mohamed Khamis, Florian Mathis, Diana Irmscher, Andreas Bulling, Albrecht Schmidt

In Proceedings of the 2019 CHI Conference Extended Abstracts on Human Factors in Computing Systems, Glasgow, Scotland, UK, May 2019 (CHI 2019)

The Bird is the Word: A Usability Evaluation of Emojis inside Text Passwords (Honourable Mention Award)

Tobias Seitz, Florian Mathis, Heinrich Hussmann

In Proceedings of the 29th Australian Conference on Human-Computer Interaction, Brisbane, QLD, Australia, November 2017 (OzCHI 2017)